DOM Optimization

A large and inefficient webpage structure (Document Object Model, or DOM) can significantly slow down your website and negatively impact Core Web Vitals. Keeping it to a reasonable size and as efficient as possible is crucial for the overall technical performance of your project and will affect the speed of interaction responses (INP metric).

In this article, I share insights about the DOM that I've gleaned over many years as a consultant. You'll discover why its overall size matters, how to measure it easily, and how to optimize the HTML structure for faster rendering.

Size Matters

The DOM, a tree of elements, tends to grow quickly over the life of a website. Adding and nesting elements in HTML is easy, so keeping the tree small takes conscious restraint.

A DOM is considered too large if it has too many elements or is nested too deeply. Google's Lighthouse tool warns at roughly 800 elements and reports an error above roughly 1,400.

This is rather strict, especially for larger sites such as e-commerce stores or applications. In our experience, browsers can handle 2,500 DOM elements quite briskly.

Once the number of DOM elements surpasses this threshold, complications arise swiftly. Naturally, the smaller and more efficient it is, the better.

Why Must the DOM Be Efficient?

It’s important to realize that HTML is initially just a string of structured text prepared for the browser. Ideally, it is assembled on the server and then downloaded to the browser, where it is converted into a dynamic tree structure. This conversion is known as "parsing".

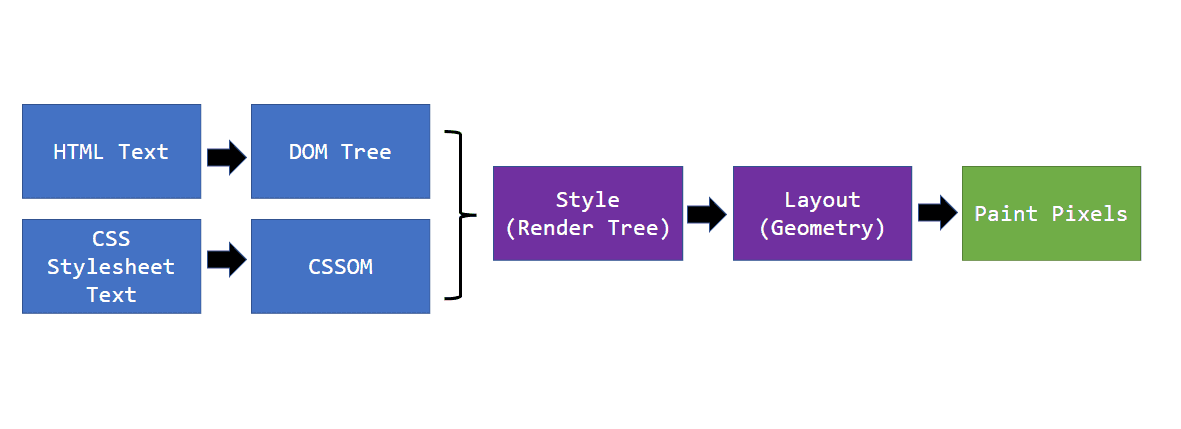

Diagram of the rendering process. HTML is parsed into DOM. After combining with CSS, it moves to layout calculations and screen drawing.


But that's not all. DOM assembly occurs at the beginning of the rendering process. The rendering process has several steps, and inefficiencies in the DOM manifest negatively throughout.

- On the server: The more complex the DOM, the more data and database queries it requires. The HTML takes longer to assemble, which worsens the TTFB metric.

- Transferring HTML to the browser: More data takes longer to transfer over the network, which worsens loading-speed metrics such as FCP and LCP.

- Parsing the HTML string and assembling the DOM: More elements mean the browser takes longer to convert them into a tree structure.

- Applying styles and layout calculation: CSS selectors have to be matched against more elements, which lengthens the style and layout calculation.

- Rendering to the screen: This phase is quite well optimized by browsers, but even here a large DOM can cause issues, depending on how the CSS is written.

- During every interaction: The DOM is dynamic and responds to user input and JavaScript. A large DOM takes more time to reflect changes on the screen.

An efficient DOM reduces the burden on the entire rendering process and also saves server resources.

Info: Understanding rendering is crucial for a fast website. In our workshops, we’ll show you how this fascinating mechanism works, step by step.

How to Test DOM Complexity?

There are several ways to find out how your DOM measures up:

Lighthouse report

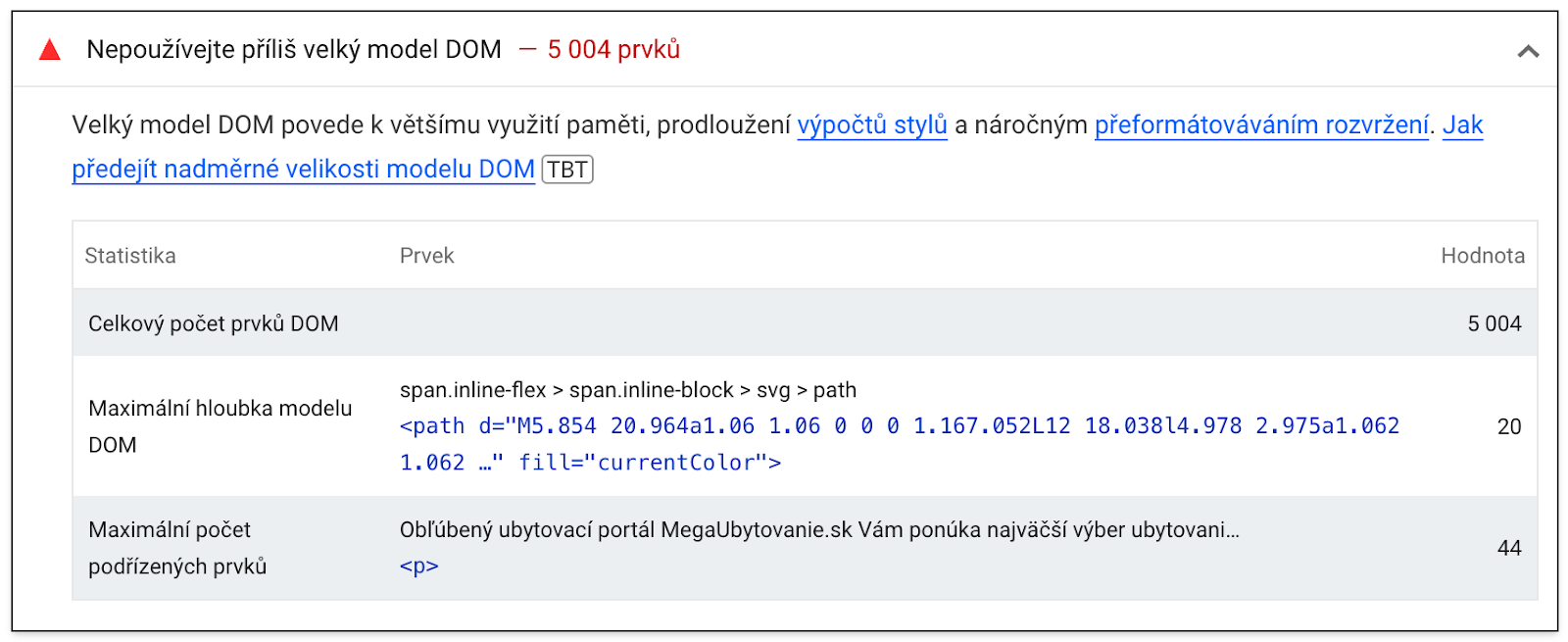

One of the Lighthouse audits reports the total number of DOM elements on the page, the maximum DOM depth, and the maximum number of child elements under a single parent.

The output of the Lighthouse tool can be seen in our test report detail.


DevTools console in the browser

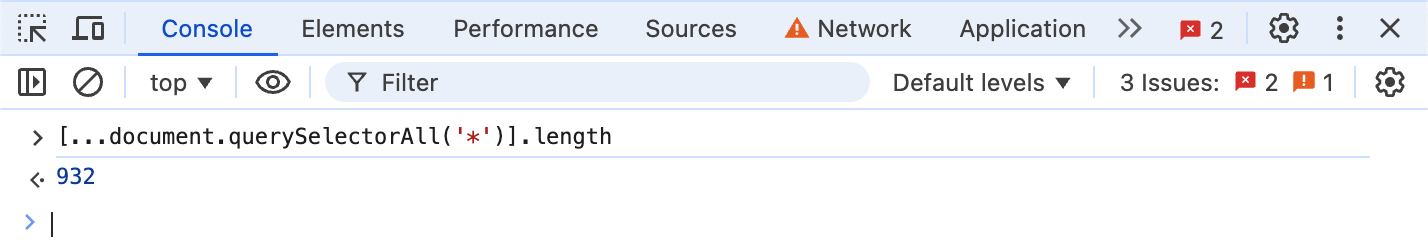

Another way is the DevTools console. Once the page has loaded, just run the following snippet of code.

```javascript
[...document.querySelectorAll('*')].length;
```

In Google Chrome, it looks like this:

The image shows the result of the script call. There are 932 elements on the page.
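The element count is only one of the three figures Lighthouse reports. A minimal sketch of how the depth and the largest number of children could be collected in the same console session follows. The function name `domStats` is our own, and the way depth is counted here (the root element is depth 1) is an assumption, so the numbers may differ slightly from what Lighthouse shows.

```javascript
// Walks an element tree and collects the total element count, the maximum depth,
// and the largest number of direct children of any single element.
// `root` is anything with a `children` collection, e.g. document.documentElement.
function domStats(root) {
  let total = 0;
  let maxDepth = 0;
  let maxChildren = 0;
  // An explicit stack avoids hitting recursion limits on very deep trees.
  const stack = [{ node: root, depth: 1 }];
  while (stack.length > 0) {
    const { node, depth } = stack.pop();
    total += 1;
    maxDepth = Math.max(maxDepth, depth);
    maxChildren = Math.max(maxChildren, node.children.length);
    for (const child of node.children) {
      stack.push({ node: child, depth: depth + 1 });
    }
  }
  return { total, maxDepth, maxChildren };
}

// In the browser console:
// domStats(document.documentElement);
```

Because the function only relies on the `children` property, it can also be run against a subtree, for example a single megamenu, to see how much one component contributes.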


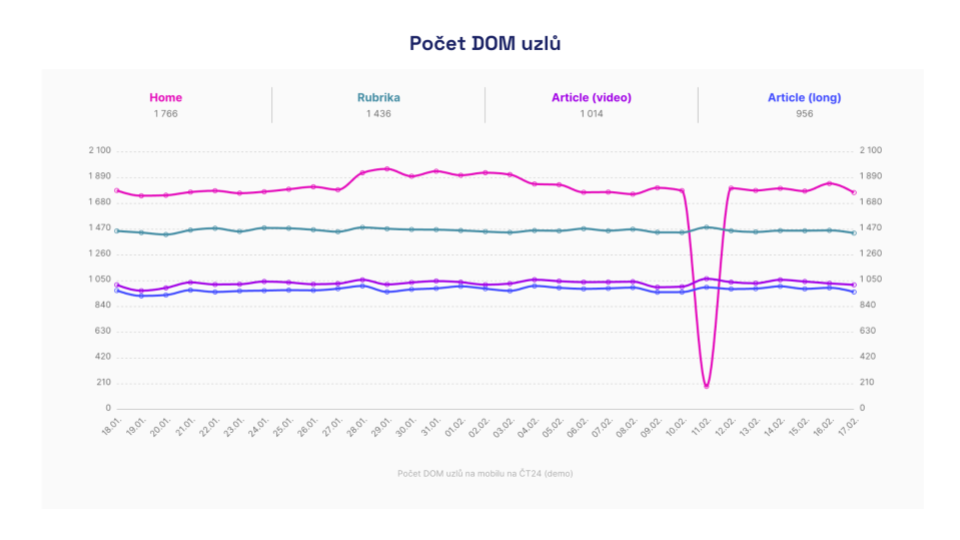

Number of DOM elements in the "Technical" report

In our monitoring PLUS, we collect the number of DOM elements for each measured page. The graph then shows how the website evolved over time.

Graph from the Technical report, showing the evolution of DOM element count over time. It also shows how monitoring detects an error on the homepage where content was not rendered correctly.


DOM Optimization

Our recommendations may change how you think about HTML and DOM structure, and you might find some of them shocking at first. When it comes down to it, the DOM doesn't have to be large, even on really complex pages.

Remove Everything Unnecessary from the Initial Code

The most efficient approach is to delete or lazy-load everything that doesn't need to be in the initial code. How do you know what to delete? Simply ask yourself these questions:

- Does the component have any significant informational value? Robots can't work with some components at all, such as forms and filters. Therefore, these don't need to be in the default code in their entirety.

- Is it purely a visual element? Visual elements need a textual description in the code to carry any information for machines. Examples include dynamic charts and maps.

- How much added value does the component have for the main content? We often clutter websites with various supplementary information. Examples include chats, side contact boxes, and often entire sidebars or footers. These often don't need to be in the default DOM.

- Is it specific to just one particular user? Removing such content significantly increases cacheability. Examples include user profile boxes, carts, and recently viewed products.

- Is content duplicated in any component? Such components unnecessarily inflate the DOM. Duplication was once the only technically correct option for coders, but this is no longer true with modern CSS. Alternatively, duplicated components can be generated and rendered by JavaScript when needed. A typical example is the main navigation, often coded twice, once for mobile and once for desktop.

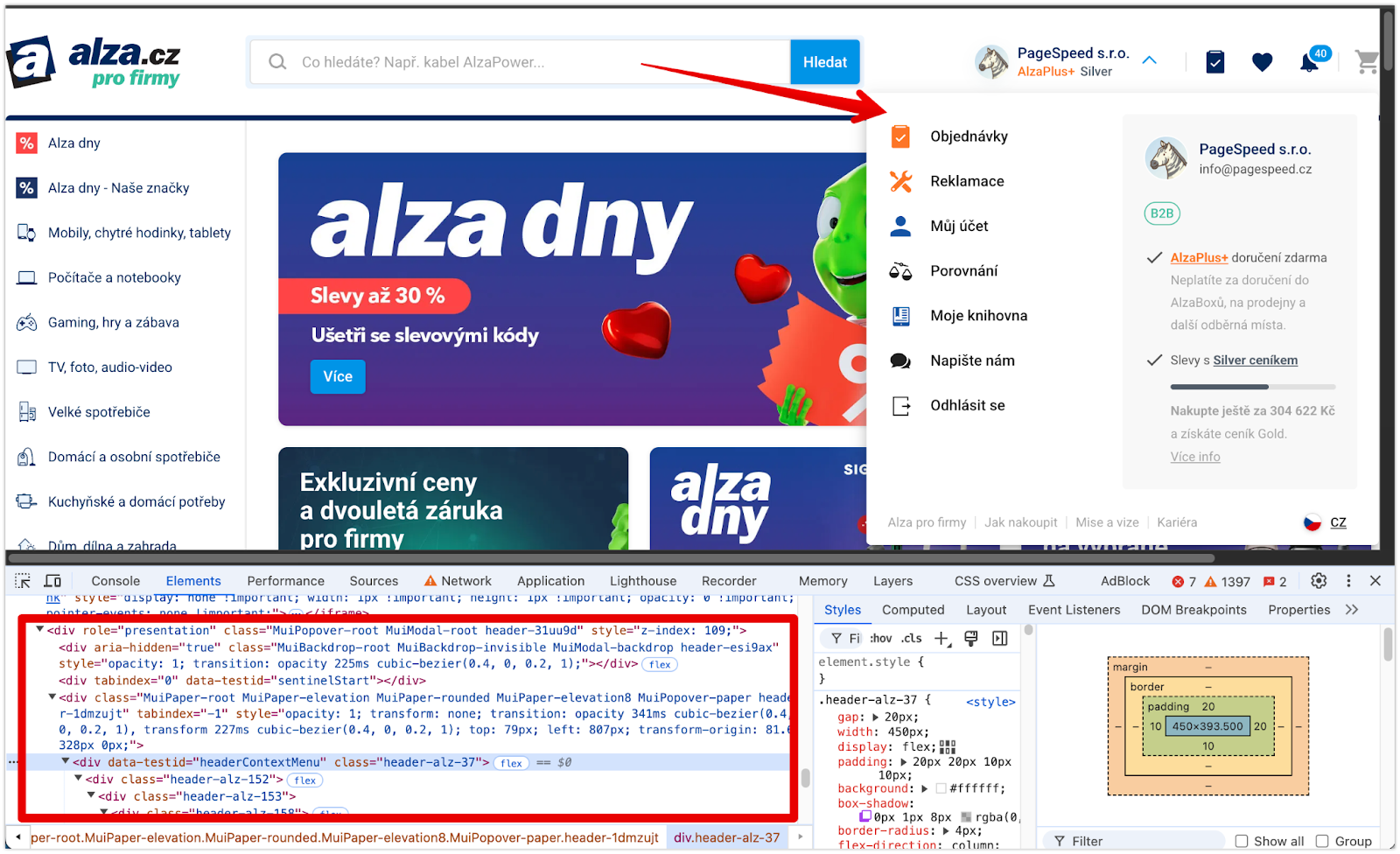

Example of a correct solution on alza.cz. The user's menu appears in the DOM only after clicking on the dropdown. When closed, it is removed again.

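To illustrate the pattern of keeping a component out of the DOM until it is opened, here is a minimal sketch in plain JavaScript. It is not the implementation used on alza.cz; the names `createOnDemandMenu` and `buildMenu` and the selectors in the usage comment are hypothetical.

```javascript
// Keeps a component out of the DOM until it is opened and removes it again on close.
// `container` is the element that should host the menu; `buildMenu` is a
// hypothetical function that returns a freshly created menu element.
function createOnDemandMenu(container, buildMenu) {
  let menu = null;
  return {
    isOpen: () => menu !== null,
    toggle() {
      if (menu === null) {
        menu = buildMenu(); // create the markup only when it is needed
        container.appendChild(menu);
      } else {
        menu.remove(); // detach it again when the dropdown closes
        menu = null;
      }
    },
  };
}

// Browser usage (selectors are placeholders):
// const userMenu = createOnDemandMenu(
//   document.querySelector('.user-box'),
//   () => Object.assign(document.createElement('ul'), { className: 'user-menu' })
// );
// document.querySelector('.user-box__button').addEventListener('click', userMenu.toggle);
```

The trade-off is that the menu has to be built on every open. For small, user-specific components this cost is usually negligible compared with carrying the markup on every page load.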

Simplify Components Until They're Visible

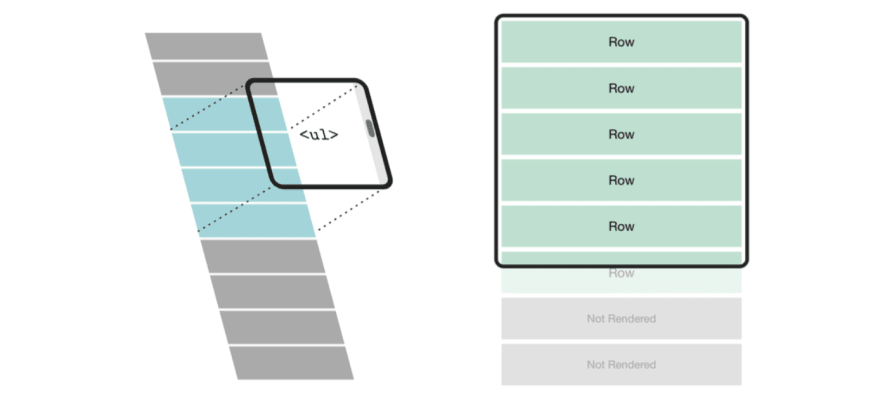

Even if a component or its content is important for SEO or accessibility, it doesn't have to be rendered in full visual quality right away, especially if it is not visible in the initial viewport.

How many components will the user actually see? Some are hidden behind interaction, like megamenus, while others are seen after scrolling. Does every component really need to be in the HTML in its final form?

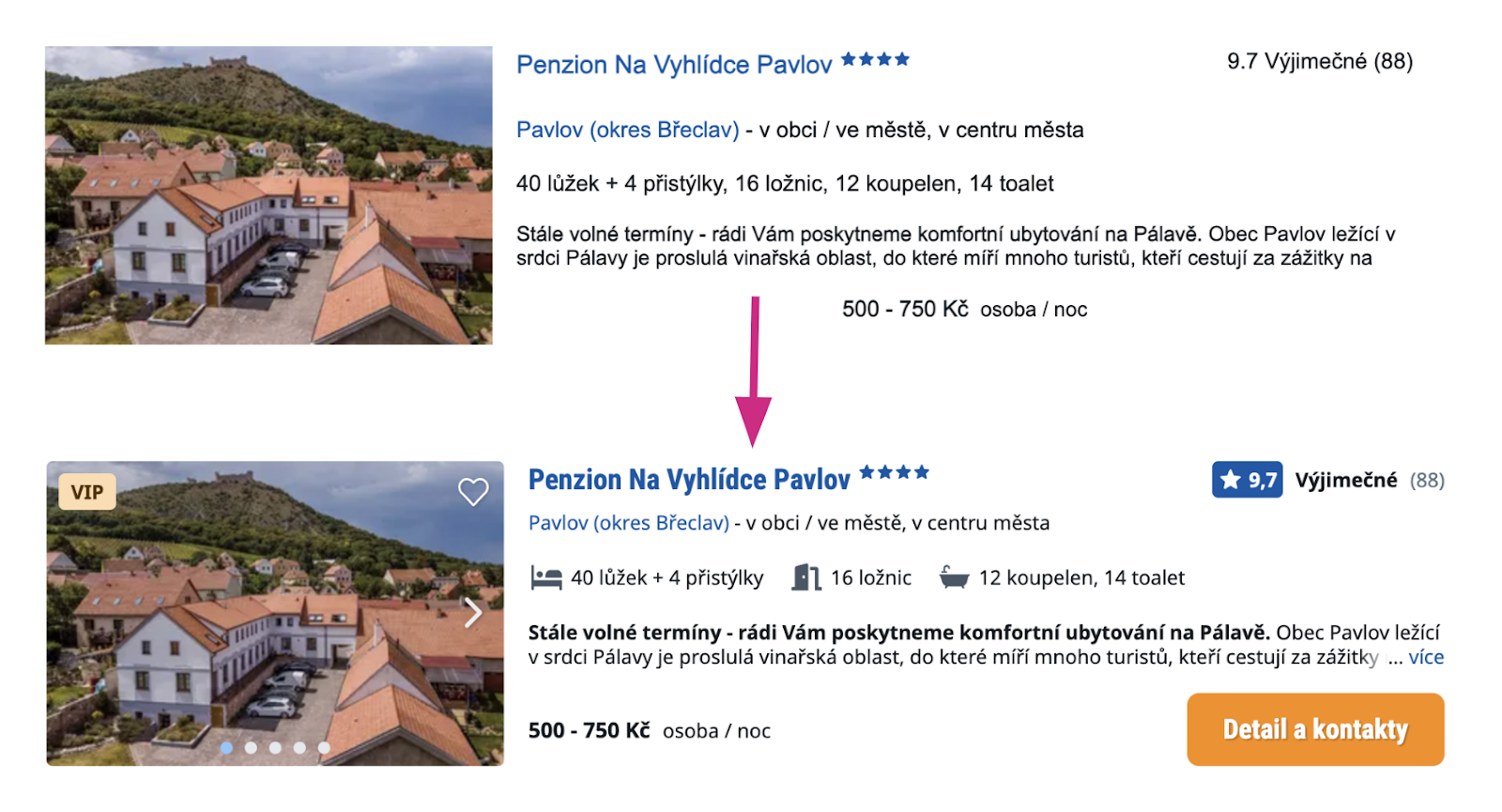

Splitting components into a simple and a rich variant is especially effective for elements that repeat many times on a page. These are typically directory pages, product listings, or other offers, as shown in the image above.

The following code example illustrates how to load a richer version of a component using the Intersection Observer:

```jsx
import React from 'react';
import { useInView } from 'react-intersection-observer';
// Image and ImagesCarousel are the project's own components; import them from wherever they live.

const Offer = ({ images, title }) => {
  // inView is true while the <article> intersects the viewport
  const { ref, inView } = useInView();

  return (
    <article className="offer" ref={ref}>
      <div className="gallery">
        {/* Simple variant: a single image. Rich variant: the carousel, only when visible. */}
        {inView ? <ImagesCarousel data={images} /> : <Image data={images[0]} />}
        <h3>{title}</h3>
      </div>
    </article>
  );
};
```

If you limit the initial HTML to the content that really matters, you'll find that the DOM skeleton can be quite simple. Visual richness can be added on the frontend during the user's visit.

Optimize Long Lists and Tables

Browsers will always take a long time to render long lists or large tables. The content must be kept to a reasonable length. A list with 100 products benefits no one.

Content must always be paginated, and if you want to use infinite scrolling, reuse DOM elements through virtual scrolling or remove already invisible items from the DOM while reserving space for them.
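The core of virtual scrolling is a small calculation: which items have to exist in the DOM for the current scroll position, and how much space must be reserved for the rest. A minimal sketch follows, assuming every item has the same known height. The function name `visibleWindow` and the `overscan` parameter (extra items rendered above and below the viewport) are our own illustrative choices.

```javascript
// Computes which items of a long list need to exist in the DOM right now.
// Assumes every item has the same known height (itemHeight, in pixels).
function visibleWindow({ scrollTop, viewportHeight, itemHeight, itemCount, overscan = 2 }) {
  const first = Math.max(0, Math.floor(scrollTop / itemHeight) - overscan);
  const last = Math.min(
    itemCount - 1,
    Math.ceil((scrollTop + viewportHeight) / itemHeight) - 1 + overscan
  );
  return {
    first,
    last,
    // Spacer heights keep the scrollbar and the layout stable for items not rendered.
    paddingTop: first * itemHeight,
    paddingBottom: (itemCount - 1 - last) * itemHeight,
  };
}
```

For a list of 1,000 items that are 100 px tall, a 600 px viewport scrolled to 1,000 px gives `{ first: 8, last: 17, paddingTop: 800, paddingBottom: 98200 }`. Only 10 items are in the DOM, while the two paddings reserve the space of the other 990.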

Simplify Component Structure

UI often involves creating numerous components. With today's modern HTML and CSS capabilities, we need fewer and fewer wrapping elements that only serve a layout role.

A typical example of waste is star ratings with unnecessary DOM expansion through separate "stars":

```jsx
// Bad: one DOM element per star
<StarRating>
  <SVGStar />
  <SVGStar />
  <SVGStar />
  <SVGStar />
  <SVGStar />
</StarRating>
```

The same result can be achieved with a single element that has a set width and a repeating background image.
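A minimal sketch of the single-element approach: one helper computes inline styles so that the element's width determines how many stars of a repeating background are visible. The helper name `starRatingStyle`, the default star size, and the image path `/img/star.svg` are assumptions made for illustration.

```javascript
// Returns inline styles for ONE element that displays `rating` stars.
// `starUrl` is a hypothetical path to a single-star image; `size` is its edge in px.
function starRatingStyle(rating, { max = 5, size = 16, starUrl = '/img/star.svg' } = {}) {
  const clamped = Math.min(max, Math.max(0, rating));
  return {
    display: 'inline-block',
    width: `${clamped * size}px`, // 3.5 stars show 3.5 tiles of the background
    height: `${size}px`,
    backgroundImage: `url(${starUrl})`,
    backgroundRepeat: 'repeat-x',
    backgroundSize: `${size}px ${size}px`,
  };
}

// Usage in JSX:
// <span role="img" aria-label="Rating: 3.5 out of 5" style={starRatingStyle(3.5)} />
```

Five or more elements per rating become one. On a listing with dozens of products, that difference adds up quickly.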

DOM Optimization: Practical Examples

As speed consultants, we have successfully optimized many DOMs.

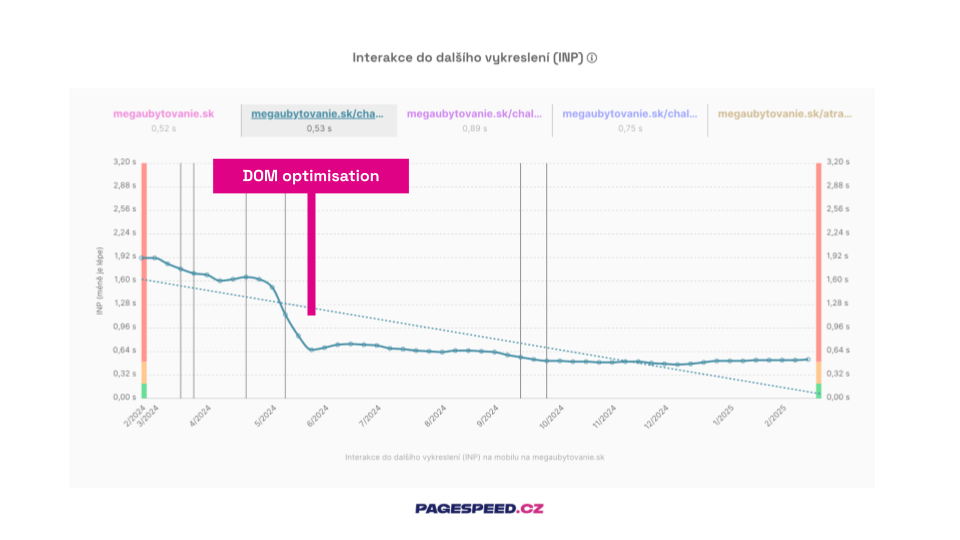

DOM optimization is possible on almost any project, as it's often not under direct scrutiny. Let's look at two optimizations that significantly helped and positively impacted the INP metric.

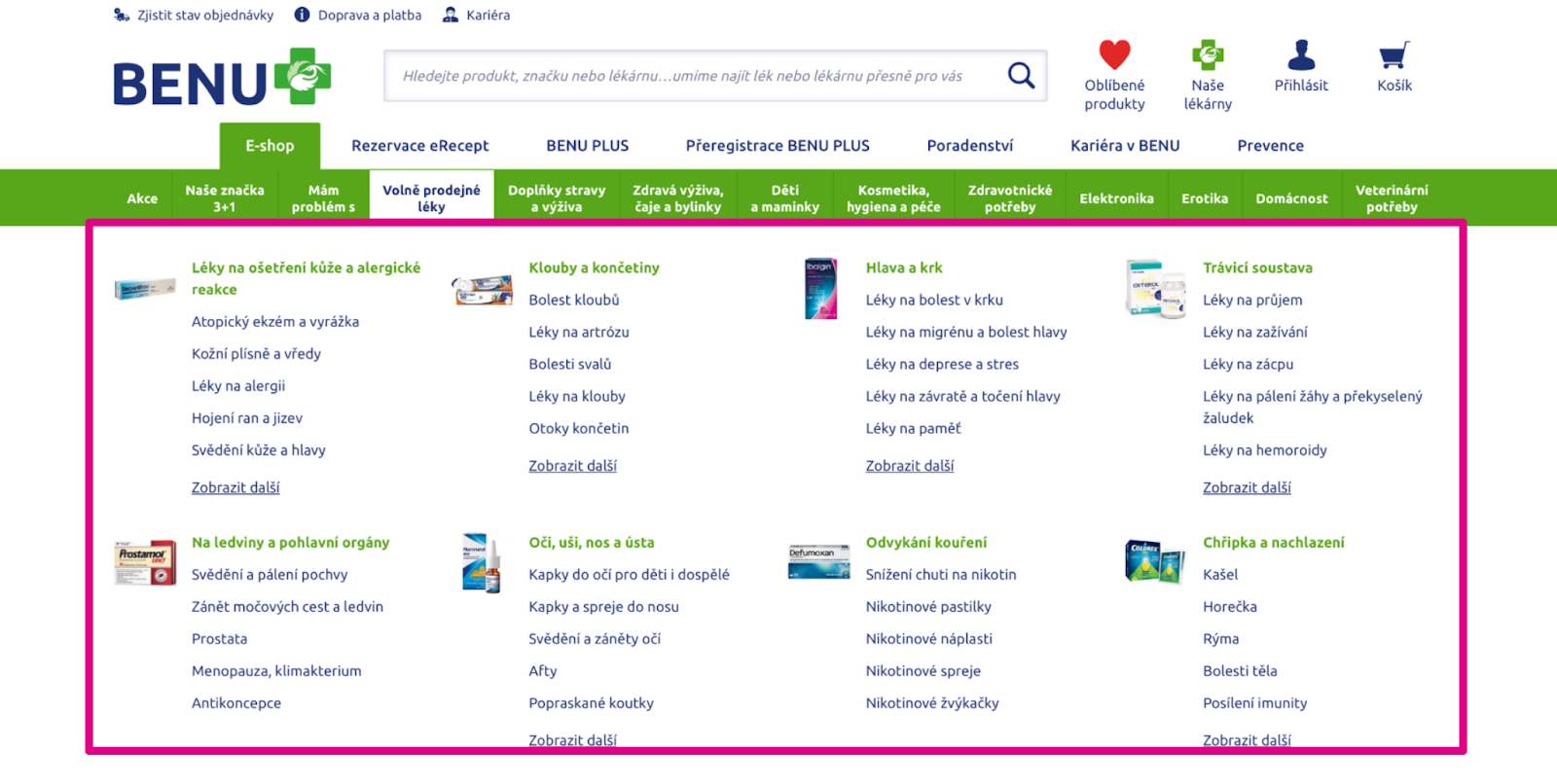

At Benu.cz, after consultations with SEO experts, we embarked on optimizing the megamenu, which had nearly 3,000 elements on each page.

Our optimization focused on reducing nested subcategories. Less important categories are lazy-loaded as needed by the user.
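Lazy-loading menu branches on demand could look roughly like the sketch below. It is not the code deployed on Benu.cz. The loader name and the injected `fetchSubcategories` function, which would call whatever endpoint the project exposes, are hypothetical.

```javascript
// Loads the subcategories of a menu item on first request and caches the promise,
// so repeated hovers or clicks never trigger a second request.
// `fetchSubcategories` is a hypothetical function: (categoryId) => Promise<Array>.
function createSubcategoryLoader(fetchSubcategories) {
  const cache = new Map();
  return function load(categoryId) {
    if (!cache.has(categoryId)) {
      const request = fetchSubcategories(categoryId).catch((error) => {
        cache.delete(categoryId); // allow a retry after a failed request
        throw error;
      });
      cache.set(categoryId, request);
    }
    return cache.get(categoryId);
  };
}

// Usage: render the branch only after the user shows interest in it.
// const load = createSubcategoryLoader((id) => fetch(`/menu/${id}`).then((r) => r.json()));
// menuItem.addEventListener('mouseenter', () => load('vitamins').then(renderBranch));
```

Caching the promise rather than the result means that two quick hovers over the same item share a single in-flight request.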

What do you see in the image?

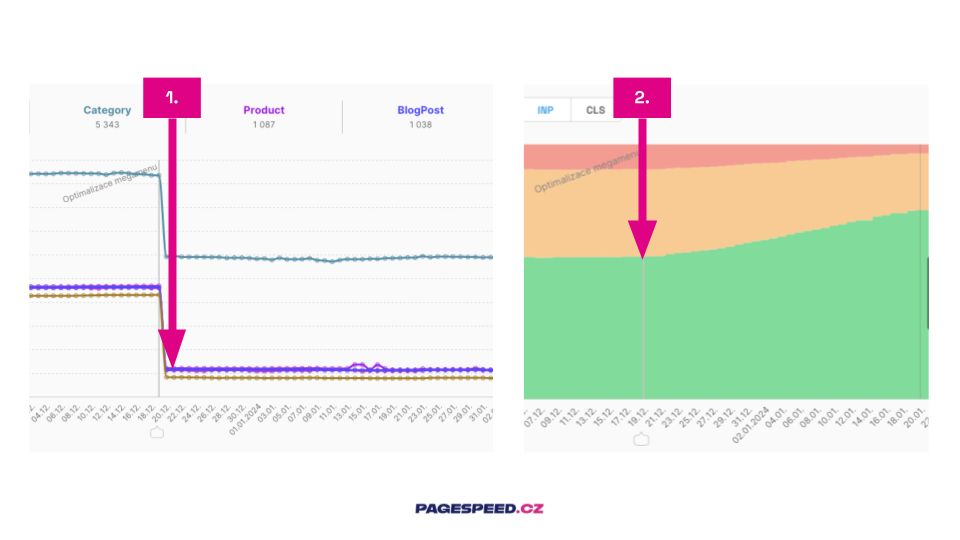

- The impact of optimization visible on specific pages.

- The whole domain shows changes in INP metric distribution since the optimization was deployed.

Simplified Placeholder Components

Megaubytovanie.sk is a content-rich project, something like a "Czechoslovak Booking". The site is built on React and contains a large number of directory blocks with offers.

We proposed using simplified components that provide only SEO-important data upon page load. This state is visually hidden from the user. The "rich variant" activates when the component enters the viewport.

Impact of DOM optimization on the INP metric for the page with offer listings.


Beware of CLS

When optimizing the DOM, make sure the layout stays stable so that faster rendering doesn't come at the cost of a worse CLS metric.

Always reserve space in the layout for lazy-loaded and simplified components using placeholders. Pay special attention to removing components during scrolling.

Scrolling is not considered a user action for the CLS metric, so layout shifts that happen while the user scrolls count fully toward the score.
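One way to remove a component's content during scrolling without shifting the layout is to measure its box first and keep that height reserved. A minimal sketch follows; the function name `collapseToPlaceholder` is our own, and it assumes the element's height is driven by its content.

```javascript
// Empties a component that has scrolled out of view but keeps its box in the layout.
// Works on any object that offers the DOM Element methods used below.
function collapseToPlaceholder(element) {
  const { height } = element.getBoundingClientRect();
  element.style.minHeight = `${height}px`; // reserve the space first
  element.replaceChildren(); // then drop the whole subtree
  return height;
}

// Browser usage (selector is a placeholder):
// collapseToPlaceholder(document.querySelector('.offer--offscreen'));
```

Setting the minimum height before emptying the element matters: in the opposite order, the box could collapse for a moment and shift everything below it.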

Tip: A specific example of CLS optimization using a placeholder can be found in the mini-case study on CLS optimization on the Datart homepage.

Conclusion

The DOM is the skeleton; the DOM is everything.

Nowhere else will you achieve as much optimization at once as you will here. Give it sufficient attention; it will certainly pay off.

An efficient DOM will increase content relevance, improve the chances of indexing through a better TTFB, and speed up your product. All of this will lead to higher conversions.